Artificial intelligence has created

tremendous opportunity for innovation, and responsible practices to

support it are more important than ever.

The following is an opinion editorial from Lama Nachman, Intel

Fellow and director of the Intelligent Systems Research Lab at

Intel Labs.

I've always admired Intel's ability to anticipate how future

technology might ignite social change. That's why in 2017, even

before AI was as prevalent as it is now, we launched our

responsible AI (RAI) program. Since then, we have seen how AI and,

more specifically, deep learning have made significant progress in

advancing many fields, including healthcare, financial services and

manufacturing.

We also have seen how the rapid advancement of large language

models (LLMs) and increased access to generative AI applications

have changed the world. Today, powerful AI tools are accessible to

people without formal AI skills. This has enabled people around the

world to discover and use AI capabilities at scale, improving how

they work, learn and play. While this has created tremendous

opportunity for innovation, there has also been an increased

concern around misuse, safety, bias and misinformation.

For all these reasons, responsible AI practices are more

important than ever.

We at Intel believe that responsible development must be the

foundation of innovation throughout the AI life cycle to ensure AI

is built, deployed and used in a safe, sustainable and ethical way.

As AI continues to rapidly evolve, so do our RAI efforts.

Internal and External Governance

A key part of our RAI strategy is using rigorous,

multidisciplinary review processes throughout the AI life cycle.

Internally, Intel’s advisory councils review various AI development

activities through the lens of grounding principles:

- Respect human rights

- Enable human oversight

- Enable transparency and explainability

- Advance security, safety and reliability

- Design for privacy

- Promote equity and inclusion

- Protect the environment

Much has changed with the rapid expansion of generative AI, and

we’ve changed with it. From developing standing guidance on safer

internal deployments of LLMs, to researching and developing a

taxonomy of the specific ways that generative AI can lead people

astray in real-world situations, we are working hard to stay ahead

of the risks.

With the expansion of generative AI have also come growing

concerns about the environmental impact of AI, so we have added

“protect the environment” as a new grounding principle, consistent

with Intel’s broader environmental stewardship commitments. While

there is nothing simple about addressing this complex area,

responsible AI has never been about simplicity. In 2017, we

committed ourselves to addressing bias even as methods were still

being developed to tackle it.

Research and Collaboration

Despite the great progress that has been made in responsible AI,

it is still a nascent field. We must continue to advance the state

of the art, especially given the increased complexity and capacity

of the latest models. At Intel Labs, we focus on key research areas

including privacy, security, safety, human/AI collaboration,

misinformation, AI sustainability, explainability and

transparency.

We also collaborate with academic institutions worldwide to

amplify the impact of our work. Recently we established the Intel

Center of Excellence on Responsible Human-AI Systems (RESUMAIS).

The multiyear effort brings together four leading research

institutions: in Spain, the European Laboratory for Learning and

Intelligent Systems (ELLIS) Alicante; and in Germany, DFKI, the

German Research Center for Artificial Intelligence, the FZI

Research Center for Information Technology and Leibniz Universität

Hannover. RESUMAIS aims to foster the ethical and user-centric

development of AI, focusing on issues such as fairness, human/AI

collaboration, accountability and transparency.

We also continue to create and participate in several alliances

across the ecosystem to come up with solutions, standards and

benchmarks to address the new and complex issues relating to RAI.

Our engagement in the MLCommons® AI Safety Working Group, the AI

Alliance, Partnership on AI working groups, Business Roundtable on

Human Rights and AI and other multistakeholder initiatives has

been instrumental in moving this work forward – not just as a

company, but as an industry.

Inclusive AI/Bringing AI Everywhere

Intel believes that responsibly bringing “AI Everywhere” is key

to the collective advancement of business and society. This belief

is the foundation of Intel’s digital readiness programming, working

to provide access to AI skills to everyone, regardless of location,

ethnicity, gender or background.

We were proud to expand our AI for Youth and Workforce programs

to include curriculum around applied ethics and environmental

sustainability. Additionally, at Intel’s third-annual AI Global

Impact Festival, winners’ projects went through an ethics audit

inspired by Intel’s multidisciplinary process. The festival

platform also featured a lesson in which more than 4,500 students

earned certifications in responsible AI skills. And, for the first

time, awards were given to project teams that delivered innovative

accessibility solutions using AI.

Looking Ahead

We are expanding our efforts to comprehend and mitigate the

unique risks created by the massive expansion of generative AI and

to develop innovative approaches to address safety, security,

transparency and trust. We are also working with our Supply Chain

Responsibility organization to accelerate progress in addressing the

human rights of AI global data enrichment workers (i.e., people who

make AI datasets usable through labeling, cleaning, annotation or

validation). Addressing this critical issue will require

industrywide efforts, and we’re leveraging our two decades of

experience tackling issues like responsible sourcing and forced

labor to help move the global ecosystem forward.

Across responsible AI, we are committed to learning about new

approaches, collaborating with industry partners and continuing our

work. Only then can we truly unlock the potential and benefits of

AI.

Lama Nachman is an Intel Fellow and director of the Intelligent

Systems Research Lab at Intel Labs.

About Intel

Intel (Nasdaq: INTC) is an industry leader, creating

world-changing technology that enables global progress and enriches

lives. Inspired by Moore’s Law, we continuously work to advance the

design and manufacturing of semiconductors to help address our

customers’ greatest challenges. By embedding intelligence in the

cloud, network, edge and every kind of computing device, we unleash

the potential of data to transform business and society for the

better. To learn more about Intel’s innovations, go to

newsroom.intel.com and intel.com.

© Intel Corporation. Intel, the Intel logo and other Intel marks

are trademarks of Intel Corporation or its subsidiaries. Other

names and brands may be claimed as the property of others.

View source

version on businesswire.com: https://www.businesswire.com/news/home/20240402656092/en/

Orly Shapiro 1-949-231-0897 orly.shapiro@intel.com

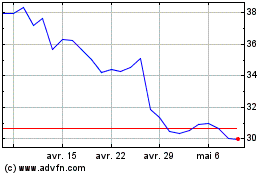

Intel (NASDAQ:INTC)

Graphique Historique de l'Action

De Juin 2024 à Juil 2024

Intel (NASDAQ:INTC)

Graphique Historique de l'Action

De Juil 2023 à Juil 2024